When conducting research that compares two separate groups, one of the most fundamental statistical tools at your disposal is the independent sample t-test. This test enables you to assess whether two unrelated groups differ significantly in terms of their mean values on a continuous variable. Whether you’re comparing exam scores between two teaching methods, salaries between genders, or treatment outcomes between different patient groups, the independent t-test is often the first step in your inferential analysis.

In this comprehensive blog post, we’ll explore what the independent sample t-test is, when and how to use it, the assumptions underlying it, how to compute and interpret the test, as well as practical examples, software implementation tips, and answers to common questions.

What Is an Independent Sample t-Test?

The independent sample t-test, also called the two-sample t-test or unpaired t-test, is a statistical method used to compare the means of two unrelated (independent) groups and determine whether the difference between them is statistically significant. The groups are distinct: the participants in one group do not overlap with those in the other.

Key characteristics:

- You have two groups which are unrelated (independent).

- You measure a continuous dependent variable on each group (e.g., test scores, height, weight).

- You want to determine if the differences between the groups’ means are likely to be genuine or due to random sampling variability.

When Should You Use an Independent Sample t-Test?

Common research situations where this test is appropriate include:

- Comparing test scores between two classes taught by different instructors.

- Comparing average salaries between males and females.

- Comparing clinical outcomes between patients receiving two different treatments.

- Any scenario where you have exactly two independent groups and a numeric outcome.

You cannot use this test if:

- The groups are related or paired (use paired sample t-test instead).

- The dependent variable is categorical.

- There are more than two groups involved in your analysis (consider ANOVA).

Hypotheses for the Independent Sample t-Test

Before running the test, formulate:

- Null hypothesis (H₀): The population means for the two groups are equal. H₀: μ₁ = μ₂

- Alternative hypothesis (H₁): The population means for the two groups are not equal (two-tailed), or one mean is greater or smaller than the other (one-tailed). H₁: μ₁ ≠ μ₂ (two-tailed); H₁: μ₁ > μ₂ or H₁: μ₁ < μ₂ (one-tailed)

The goal is to statistically evaluate whether the difference between the sample means reflects a true difference in the populations.
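As a rough illustration of how the choice between a two-tailed and a one-tailed alternative plays out in software, the sketch below uses scipy's `ttest_ind` with its `alternative` argument (available in SciPy 1.6 and later). The scores are made-up values for illustration only, not data from this post.

```python
from scipy import stats

# Hypothetical scores for two independent groups (illustrative values only)
group1 = [82, 75, 90, 68, 77, 85, 79, 88]
group2 = [70, 65, 80, 72, 60, 74, 69, 71]

# Two-tailed test: H1 is mu1 != mu2 (the default)
two_sided = stats.ttest_ind(group1, group2, alternative="two-sided")

# One-tailed test: H1 is mu1 > mu2
greater = stats.ttest_ind(group1, group2, alternative="greater")

print(f"two-tailed p = {two_sided.pvalue:.4f}")
print(f"one-tailed p = {greater.pvalue:.4f}")
```

When the observed difference points in the hypothesized direction, the one-tailed p-value is half the two-tailed one, which is why the direction should be chosen before looking at the data.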

Assumptions of the Independent Sample t-Test

To trust the results, certain assumptions must be met:

- Independence: The observations in each group must be independent. One participant should not be in both groups.

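- Normality: The dependent variable should be approximately normally distributed within each group. For moderate to large samples (as a rule of thumb, n > 30 per group), this assumption matters less because, by the Central Limit Theorem, the sample means are approximately normal even when the raw data are not.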

- Homogeneity of Variances (Equal variances): The variances of the dependent variable in the two groups should be approximately equal. This can be checked using tests such as Levene’s test. If this assumption is violated, a variation called Welch’s t-test can be used.

- Measurement level: The dependent variable should be continuous (interval or ratio scale).

If these assumptions are not met, consider an alternative such as a nonparametric test (e.g., the Mann-Whitney U test) or an adjusted test such as Welch’s t-test.
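One possible way to check normality and, if needed, fall back to a nonparametric test is sketched below with scipy. The Shapiro-Wilk test is an assumption here (this post does not name a specific normality test), and the data are made-up illustrative values.

```python
from scipy import stats

# Hypothetical measurements for two independent groups (illustrative only)
group1 = [82, 75, 90, 68, 77, 85, 79, 88]
group2 = [70, 65, 80, 72, 60, 74, 69, 71]

# Shapiro-Wilk: H0 is that the sample comes from a normal distribution.
# A small p-value suggests a departure from normality.
for name, data in (("group1", group1), ("group2", group2)):
    w_stat, p_norm = stats.shapiro(data)
    print(f"{name}: W = {w_stat:.3f}, p = {p_norm:.3f}")

# Nonparametric alternative when normality looks doubtful
u_stat, p_u = stats.mannwhitneyu(group1, group2, alternative="two-sided")
print(f"Mann-Whitney U = {u_stat:.1f}, p = {p_u:.4f}")
```

With small samples, normality tests have little power, so a histogram or Q-Q plot is a useful companion to the p-value.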

Formula and Calculation of the Independent Sample t-Test

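The independent t-test compares the difference between the two group means in relation to the variability within the groups. The general formula is:

$$t = \frac{\bar{X}_1 - \bar{X}_2}{SE}$$

Where:

- $\bar{X}_1$ and $\bar{X}_2$ are the sample means of groups 1 and 2.

- $SE$ is the standard error of the difference between means.

For equal variances assumed, the standard error is calculated as:

$$SE = s_p \sqrt{\frac{1}{n_1} + \frac{1}{n_2}}, \qquad s_p^2 = \frac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2}$$

Where:

- $s_p^2$ is the pooled variance.

- $s_1^2$ and $s_2^2$ are the sample variances of groups 1 and 2.

- $n_1$ and $n_2$ are the sample sizes of groups 1 and 2.

For unequal variances (Welch’s t-test), SE and degrees of freedom are calculated differently, adjusting for variance differences:

$$SE = \sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}}, \qquad df \approx \frac{\left(\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}\right)^2}{\frac{(s_1^2/n_1)^2}{n_1 - 1} + \frac{(s_2^2/n_2)^2}{n_2 - 1}}$$

The degrees of freedom for equal variances: $df = n_1 + n_2 - 2$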
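To make the two calculations concrete, here is a minimal sketch in plain Python that computes the t-statistic and degrees of freedom from summary statistics. The function names `pooled_t` and `welch_t` are hypothetical helpers written for this illustration, and the Welch degrees of freedom use the standard Welch-Satterthwaite approximation.

```python
import math


def pooled_t(mean1, sd1, n1, mean2, sd2, n2):
    """t-statistic and df assuming equal variances (pooled standard error)."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / df
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (mean1 - mean2) / se, df


def welch_t(mean1, sd1, n1, mean2, sd2, n2):
    """t-statistic and approximate df without assuming equal variances."""
    v1, v2 = sd1**2 / n1, sd2**2 / n2
    se = math.sqrt(v1 + v2)
    # Welch-Satterthwaite approximation to the degrees of freedom
    df = (v1 + v2) ** 2 / (v1**2 / (n1 - 1) + v2**2 / (n2 - 1))
    return (mean1 - mean2) / se, df


# Illustrative inputs: equal SDs and equal group sizes
print(pooled_t(10, 2, 5, 8, 2, 5))
print(welch_t(10, 2, 5, 8, 2, 5))
```

When the standard deviations and group sizes are equal, as in the illustrative call, both versions give the same t and the same degrees of freedom; they diverge as the variances or sample sizes become unbalanced.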

Step-by-Step Guide to Conducting the Test

- Collect data: Obtain independent samples from two groups.

- Calculate descriptive statistics: Find means, variances, and sample sizes.

- Check assumptions: Test for normality and equal variances.

- Choose appropriate test: Use standard t-test if variances are equal; Welch’s t-test if not.

- Calculate the t-statistic using the formulas above.

- Determine degrees of freedom based on the method.

- Find the p-value corresponding to the calculated t and degrees of freedom.

- Make a conclusion:

  - If p-value < significance level (commonly 0.05), reject the null hypothesis: the evidence suggests the groups differ.

  - Otherwise, fail to reject the null hypothesis: there is insufficient evidence of a difference.
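The steps above can be sketched as a single function. This is only one possible arrangement, assuming raw observations are available; `compare_two_groups` is a hypothetical helper name, the data are invented, and scipy's `levene` defaults to centering on the median rather than the mean.

```python
from scipy import stats


def compare_two_groups(group1, group2, alpha=0.05):
    """Check equal variances, pick Student's or Welch's test, and decide."""
    # Check the equal-variance assumption with Levene's test
    _, p_levene = stats.levene(group1, group2)
    equal_var = bool(p_levene >= alpha)

    # Standard t-test if variances look equal, Welch's t-test otherwise
    result = stats.ttest_ind(group1, group2, equal_var=equal_var)

    return {
        "test": "Student" if equal_var else "Welch",
        "t": float(result.statistic),
        "p": float(result.pvalue),
        "reject_h0": bool(result.pvalue < alpha),
    }


# Hypothetical samples (illustrative values only)
class_a = [82, 75, 90, 68, 77, 85, 79, 88]
class_b = [70, 65, 80, 72, 60, 74, 69, 71]
outcome = compare_two_groups(class_a, class_b)
print(outcome)
```

Some analysts skip the variance pre-test and use Welch's test throughout; the function follows the workflow described in this post rather than taking a position on that choice.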

Example: Salary Comparison Between Two Groups

Suppose you want to test if average salaries differ between males and females in a company.

| Group | Sample Size | Mean Salary ($) | Standard Deviation ($) |

|---|---|---|---|

| Males | 30 | 55,000 | 8,000 |

| Females | 25 | 50,000 | 7,500 |

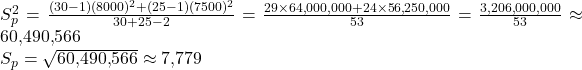

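Step 1: Calculate Pooled Variance

$$s_p^2 = \frac{(30 - 1)(8{,}000)^2 + (25 - 1)(7{,}500)^2}{30 + 25 - 2} = \frac{3{,}206{,}000{,}000}{53} \approx 60{,}490{,}566$$

so the pooled standard deviation is $s_p \approx 7{,}777.6$.

Step 2: Calculate Standard Error (SE)

$$SE = 7{,}777.6 \times \sqrt{\frac{1}{30} + \frac{1}{25}} \approx 7{,}777.6 \times 0.2708 \approx 2{,}106.2$$

Step 3: Calculate t-Statistic

$$t = \frac{55{,}000 - 50{,}000}{2{,}106.2} \approx 2.37$$

Step 4: Degrees of Freedom

$$df = 30 + 25 - 2 = 53$$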

Step 5: Find p-value and Conclusion

Using a t-table or calculator, for df = 53, a t-value of 2.37 corresponds to a two-tailed p-value of approximately 0.021.

At a significance level of 0.05, p < 0.05, so we reject the null hypothesis.

Interpretation: There is statistically significant evidence that males and females have different average salaries in this company.
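The worked example can be cross-checked from the summary table alone. The sketch below uses scipy's `ttest_ind_from_stats`, which takes means, standard deviations, and sample sizes instead of raw data; only the numbers from the salary table are used.

```python
from scipy import stats

# Summary statistics from the salary table above
result = stats.ttest_ind_from_stats(
    mean1=55_000, std1=8_000, nobs1=30,   # males
    mean2=50_000, std2=7_500, nobs2=25,   # females
    equal_var=True,                       # pooled-variance (Student's) t-test
)

print(f"t = {result.statistic:.2f}, p = {result.pvalue:.3f}")
# t = 2.37, p = 0.021
```

Setting `equal_var=False` in the same call would give the Welch version of the test for comparison.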

Tests for Equality of Variances: Levene’s Test

Before applying the standard independent t-test assuming equal variances, it is important to test if this assumption holds.

- Levene’s test checks the null hypothesis that population variances are equal.

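- If Levene’s test is significant (p < 0.05), there is evidence that the variances differ, so use Welch’s t-test.

- If it is not significant, there is no evidence against the equal-variance assumption, and the standard t-test can be used.

Some packages, such as SPSS, report Levene’s test alongside the independent t-test; in others it is a separate function call.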

Running Independent t-Test in Statistical Software

SPSS

- Go to Analyze > Compare Means > Independent-Samples T Test.

- Specify your grouping variable and the test variable.

- The output reports t-statistic, degrees of freedom, p-value, and Levene’s test.

R

```r
# group1_data and group2_data are numeric vectors, one per group
t.test(group1_data, group2_data, var.equal = TRUE)  # for equal variances
t.test(group1_data, group2_data)                    # defaults to Welch's test
```

Python (scipy)

```python
from scipy import stats

# group1 and group2 are array-likes of observations, one per group

# For unequal variances (Welch's)
t_stat, p_val = stats.ttest_ind(group1, group2, equal_var=False)

# For equal variances
t_stat, p_val = stats.ttest_ind(group1, group2, equal_var=True)
```

Interpretation and Reporting

When reporting, present:

- Descriptive statistics for both groups (mean, SD).

- t-statistic value, degrees of freedom, and p-value.

- Statement indicating if the difference was significant or not.

- Confidence interval for the difference in means (optional but recommended).

Example Report:

An independent samples t-test was conducted to compare salaries between males (M = $55,000, SD = $8,000) and females (M = $50,000, SD = $7,500). There was a significant difference in salaries (t(53) = 2.37, p = 0.021), indicating males earn more on average than females in this sample.
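Since a confidence interval for the difference in means is recommended in the report, here is a minimal sketch of how a 95% interval could be computed from the summary statistics in the salary example. It assumes equal variances (the pooled standard error) and uses scipy only for the critical t-value.

```python
import math
from scipy import stats

# Summary statistics from the salary example
mean1, sd1, n1 = 55_000, 8_000, 30   # males
mean2, sd2, n2 = 50_000, 7_500, 25   # females

df = n1 + n2 - 2
pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / df
se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))

# Critical value for a two-sided 95% interval
t_crit = stats.t.ppf(0.975, df)

diff = mean1 - mean2
ci_low, ci_high = diff - t_crit * se, diff + t_crit * se
print(f"95% CI for the mean difference: ({ci_low:,.0f}, {ci_high:,.0f})")
```

An interval that excludes zero is consistent with the significant two-tailed result at the 0.05 level, and its width shows how imprecisely the difference is estimated.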

Advantages of the Independent Sample t-Test

- Simple and widely understood.

- Useful for comparing two groups on a continuous outcome.

- Well supported in statistical software.

- Can adjust for unequal variances using Welch’s test.

Limitations and Considerations

- Only compares exactly two groups.

- Sensitive to violations of assumptions, particularly normality and equal variances.

- Outliers can influence the mean and variance considerably.

- A significant p-value alone says nothing about practical significance; effect sizes and confidence intervals are needed for that.

Conclusion

The independent sample t-test is a robust and accessible statistical technique for comparing the means of two separate groups and assessing whether observed differences are likely to reflect true population differences. Its clear hypotheses, straightforward assumptions, and wide software support make it an indispensable tool for researchers across fields—from social sciences to business to healthcare.

Understanding the underlying assumptions and calculation methods helps avoid misapplication, while interpretation of test statistics and p-values enables sound decision-making. Importantly, complementing the test with effect sizes and confidence intervals enriches insights by contextualizing practical significance.

Whenever you want to evaluate whether two groups differ on a continuous outcome, the independent t-test is your go-to method, provided you check its assumptions first.


Frequently Asked Questions (Q&A)

1. What if my variances are unequal?

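Use Welch’s t-test, which adjusts the standard error and degrees of freedom for the difference in variances. Most software offers it as an option: R’s t.test uses it by default, and scipy’s ttest_ind applies it when equal_var=False.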

2. Can I use this test with small sample sizes?

Yes, but check assumptions carefully. The t-test is fairly robust, but normality is more critical with small data sets.

3. What is the difference between independent and paired t-tests?

- Independent t-test compares means of two unrelated groups.

- Paired t-test compares means from the same group at two time points or matched pairs.
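The practical difference shows up clearly when the same numbers are analyzed both ways. In the sketch below, the before/after scores are invented for illustration; `ttest_rel` is scipy's paired test and `ttest_ind` the independent one.

```python
from scipy import stats

# Hypothetical scores for the same six participants at two time points
before = [72, 68, 75, 80, 66, 71]
after = [75, 70, 79, 83, 70, 72]

# Paired test: analyzes the within-person differences
paired = stats.ttest_rel(before, after)

# Independent test: (incorrectly here) treats the two columns as unrelated groups
unpaired = stats.ttest_ind(before, after)

print(f"paired:      t = {paired.statistic:.2f}, p = {paired.pvalue:.4f}")
print(f"independent: t = {unpaired.statistic:.2f}, p = {unpaired.pvalue:.4f}")
```

Because each person serves as their own control, the paired test removes between-person variability; applying the independent test to paired data like these discards that information and gives a much larger p-value.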

4. How do I interpret a non-significant result?

It means you do not have enough evidence to say the two population means differ, but it does not prove they are equal.

5. Can I test more than two groups with independent t-tests?

No. Running a separate t-test for every pair of groups inflates the overall Type I error rate; use ANOVA to compare more than two groups.
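A quick calculation shows how fast the error rate grows. The sketch below assumes the pairwise tests are independent, which is a simplification (tests that share a group are correlated), so the figures are approximate; `familywise_error` is a hypothetical helper name.

```python
from math import comb


def familywise_error(n_groups, alpha=0.05):
    """Number of pairwise tests and the chance of at least one false positive."""
    n_tests = comb(n_groups, 2)  # every pair of groups gets its own t-test
    return n_tests, 1 - (1 - alpha) ** n_tests


for k in (2, 3, 4, 5):
    n_tests, fwer = familywise_error(k)
    print(f"{k} groups: {n_tests} tests, familywise error ~ {fwer:.3f}")
```

With four groups there are already six pairwise comparisons, and the chance of at least one false positive is roughly 26% rather than 5%.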

6. Do I need to check assumptions before performing the t-test?

Yes, violations may lead to inaccurate results. Test for normality and variance equality before proceeding.

7. What is the effect size for an independent t-test?

Cohen’s d is the most common choice. It is calculated by dividing the difference between the group means by the pooled standard deviation, giving a standardized measure of the size of the difference.
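As a small sketch of that calculation, the function below computes Cohen's d from summary statistics and applies it to the salary example from this post. The name `cohens_d` is a hypothetical helper, not a library function.

```python
import math


def cohens_d(mean1, sd1, n1, mean2, sd2, n2):
    """Mean difference divided by the pooled standard deviation."""
    pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2)
    return (mean1 - mean2) / math.sqrt(pooled_var)


# Salary example: males vs. females
d = cohens_d(55_000, 8_000, 30, 50_000, 7_500, 25)
print(f"Cohen's d = {d:.2f}")
# Cohen's d = 0.64
```

Unlike the t-statistic, d does not grow with sample size, so it can be compared across studies with different numbers of participants.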