In the world of statistics and data analysis, understanding relationships between variables is central to drawing meaningful conclusions. Whether you’re analyzing the impact of study time on exam scores, advertising spend on sales, or temperature on crop yield, one concept consistently plays a pivotal role: the explanatory variable.

Also known as the independent variable, the explanatory variable is the factor that is believed to influence or explain changes in another variable. It serves as the starting point in analytical models, helping researchers and analysts uncover patterns, test hypotheses, and make predictions.

In this blog, we’ll break down what an explanatory variable is, how it works, where it is used, and why it is essential in both academic research and real-world decision-making.

What Is an Explanatory Variable?

An explanatory variable is a variable used to explain variation in a response variable (also called the dependent variable). It is the input or predictor that may cause or influence an outcome, and it serves as the input in regression models and experiments. Researchers manipulate or observe it to assess its impact, such as testing how fertilizer levels affect crop yield, where fertilizer level is the explanatory variable. For example:

- Study hours → Exam scores

- Advertising budget → Sales revenue

- Temperature → Ice cream sales

In each case, the first variable is the explanatory variable and the second is the outcome being explained. The term “independent variable” suggests a variable unaffected by anything else; an explanatory variable, by contrast, may be correlated with confounders or with other predictors and still help explain the outcome. Explanatory variables contrast with response variables, which measure the effect, like yield in the crop example.

Role of the Explanatory Variable in Regression Analysis

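In linear regression, explanatory variables appear on the right side of the equation:

$$y = \beta_0 + \beta_1 x + \varepsilon$$

where $x$ is the explanatory variable, $y$ is the response variable, $\beta_0$ is the intercept, and $\varepsilon$ is the error term. The coefficient $\beta_1$ quantifies the size and direction of the effect of $x$.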
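As a minimal sketch of simple linear regression in practice, the snippet below fits a one-variable model with the closed-form least-squares formulas. The study-hours and exam-score numbers are made up for illustration, and `fit_simple_ols` is a hypothetical helper name, not part of any library.

```python
def fit_simple_ols(x, y):
    """Return (intercept, slope) of the least-squares line y = b0 + b1 * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Slope = co-variation of x and y divided by the variation of x
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Illustrative data (assumed, not from a real study)
study_hours = [1, 2, 3, 4, 5]        # explanatory variable
exam_scores = [52, 58, 61, 67, 72]   # response variable

intercept, slope = fit_simple_ols(study_hours, exam_scores)
print(f"score = {intercept:.1f} + {slope:.1f} * hours")
```

With these numbers the fitted slope is 4.9, so each additional study hour is associated with roughly 4.9 more exam points in this toy data set.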

Multiple regression incorporates several explanatory variables, such as age, income, and education predicting house prices. Model selection techniques like stepwise regression identify the most relevant ones.
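A rough sketch of multiple regression, assuming made-up data for the age, income, and education example: the code solves the normal equations $(X^\top X)\beta = X^\top y$ using only the standard library. The helper names `solve_linear_system` and `fit_ols` and the coefficient values are hypothetical; in practice a statistics library would usually do this step.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_ols(rows, y):
    """Return [intercept, b1, ..., bk] from the normal equations."""
    design = [[1.0] + list(r) for r in rows]  # prepend the intercept column
    k = len(design[0])
    xtx = [[sum(d[i] * d[j] for d in design) for j in range(k)] for i in range(k)]
    xty = [sum(d[i] * t for d, t in zip(design, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Hypothetical buyers: (age, income, years of education)
rows = [(30, 40, 12), (45, 85, 16), (28, 52, 14),
        (52, 60, 12), (39, 95, 18), (61, 70, 16)]
# Noise-free prices built from assumed coefficients, so the fit should recover them
prices = [20 + 0.5 * age + 2.0 * income + 3.0 * edu for age, income, edu in rows]

coefficients = fit_ols(rows, prices)
print([round(c, 3) for c in coefficients])
```

Because the prices are generated without noise, the recovered coefficients match the assumed ones (20, 0.5, 2.0, 3.0) up to floating-point error. Each coefficient is read as the effect of its variable with the others held fixed.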

Identifying the Explanatory Variable

Start by framing the research question: “Does X cause or predict Y?” X is then the explanatory variable. In observational studies, use domain knowledge to select candidates, such as smoking status as an explanation for lung cancer risk.

Check for linearity, multicollinearity (high correlation among explanatory variables), and relevance using scatterplots or correlation matrices. If a relationship is nonlinear, transform the variable (e.g., with a log transform).

Explanatory variables can take different forms depending on the context:

- Continuous Variables: e.g., age, income, temperature

- Categorical Variables: e.g., gender, education level, region

- Binary Variables: e.g., yes/no, pass/fail

Each type requires different handling in statistical models.
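One common way to handle the categorical and binary cases is dummy (0/1) coding, sketched below with the standard library only. The `dummy_code` helper, the region labels, and the choice of the first sorted level as the reference category are assumptions made for illustration.

```python
def dummy_code(values, prefix):
    """Return {column_name: [0/1, ...]}, dropping the first sorted level as reference."""
    levels = sorted(set(values))
    non_reference = levels[1:]  # the reference level is absorbed by the intercept
    return {
        f"{prefix}_{level}": [1 if v == level else 0 for v in values]
        for level in non_reference
    }


# Categorical variable with three levels (hypothetical data)
regions = ["north", "south", "west", "south", "north"]
region_dummies = dummy_code(regions, "region")
print(region_dummies)

# Binary variable: a single 0/1 column is enough
passed = ["yes", "no", "yes"]
passed_binary = [1 if v == "yes" else 0 for v in passed]
print(passed_binary)
```

Dropping one level avoids perfectly collinear columns when the model also has an intercept. Continuous variables such as age or income can usually enter the model as they are, possibly after a transformation.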

Real-World Examples

- Medicine: Drug dosage (explanatory) predicts patient recovery time (response).

- Economics: Interest rates explain GDP growth.

- Education: Study hours predict exam scores.

- Marketing: Ad spend forecasts sales revenue.

The explanatory variables in these examples are quantitative (hours, dosage, spend), but they can equally be categorical, such as treatment type.

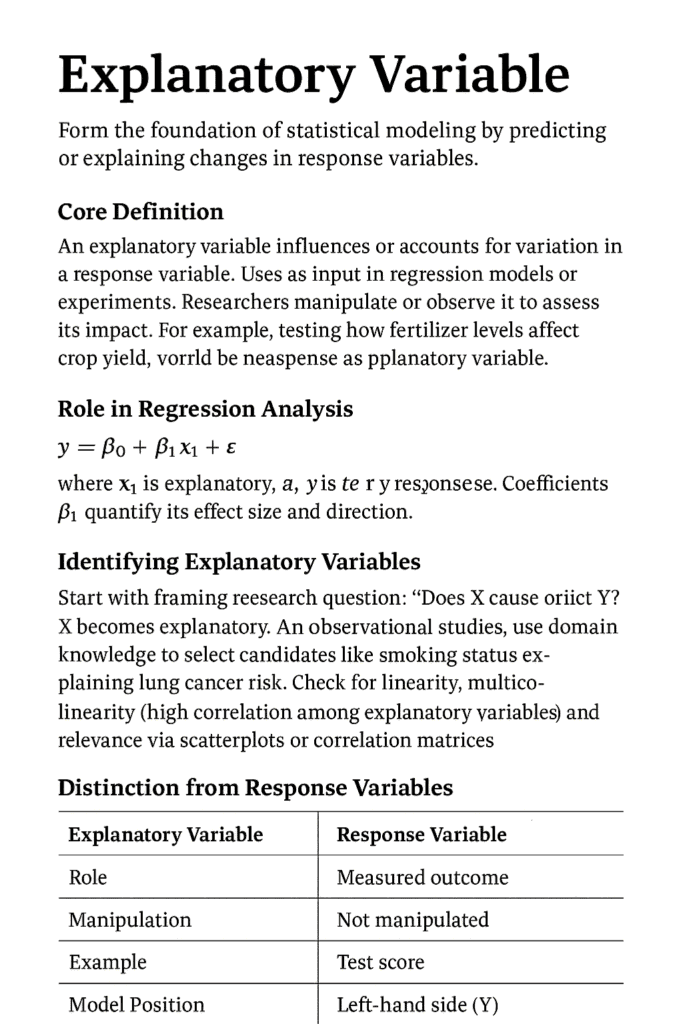

Distinction from Response Variables

Common Pitfalls

While powerful, explanatory variables must be used carefully:

- Confounding Variables: Hidden variables that affect both explanatory and response variables.

- Correlation vs Causation: Confusing correlation with causation leads to spurious explanatory variables, like ice cream sales “explaining” drownings due to summer confounding. Control via randomization or covariates.

- Omitted variable bias: Occurs when a key predictor that is correlated with the included variables is left out, which biases the estimated effects of the variables that remain. Always check model fit with R-squared and residual plots.

- Overfitting: Overfitting from too many explanatory variables reduces generalizability; use cross-validation.

- Multicollinearity: When multiple explanatory variables are highly correlated with each other.

Ignoring these issues can lead to misleading conclusions.
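The ice cream and drownings example can be simulated to show how a confounder creates a spurious effect and how adjusting for it removes it. This is a sketch under assumed numbers: temperature drives both variables, ice cream has no direct effect on drownings, and the adjustment is done by regressing both variables on temperature and comparing the residuals. The helper names are hypothetical.

```python
import random


def ols_line(x, y):
    """Return (intercept, slope) of the least-squares line of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return my - slope * mx, slope


def residuals(x, y):
    """Part of y that is left after removing its linear dependence on x."""
    b0, b1 = ols_line(x, y)
    return [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]


rng = random.Random(0)  # fixed seed so the run is repeatable
n = 5000
temperature = [rng.gauss(25, 5) for _ in range(n)]            # confounder
ice_cream = [2.0 * t + rng.gauss(0, 3) for t in temperature]  # driven by temperature
drownings = [0.5 * t + rng.gauss(0, 2) for t in temperature]  # driven by temperature only

# Naive model: drownings explained by ice cream sales alone
_, naive_slope = ols_line(ice_cream, drownings)

# Adjusted model: compare what is left of each variable after removing temperature
_, adjusted_slope = ols_line(residuals(temperature, ice_cream),
                             residuals(temperature, drownings))

print(f"naive slope:    {naive_slope:.3f}")
print(f"adjusted slope: {adjusted_slope:.3f}")
```

The naive slope comes out clearly positive even though ice cream has no effect in the simulation, while the adjusted slope is close to zero. Adding temperature as a covariate in a multiple regression gives the same adjusted coefficient.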

Advanced Applications

In machine learning, explanatory variables feed algorithms like random forests for prediction. Feature importance rankings highlight top influencers.

Causal inference extends this via instrumental variables, isolating true effects amid endogeneity. Time-series models treat lagged values as explanatory.

Logistic regression adapts the same idea to binary responses, such as using predictors to model the presence or absence of a disease.
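A minimal logistic regression sketch with one explanatory variable, fitted by plain gradient descent on the log-loss. The exposure values and outcomes are invented, and `fit_logistic`, the learning rate, and the step count are illustrative choices rather than recommended settings.

```python
import math


def sigmoid(z):
    """Map a linear score to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(xs, ys, lr=0.1, steps=5000):
    """Fit P(y = 1) = sigmoid(b0 + b1 * x) by gradient descent on the mean log-loss."""
    b0, b1 = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        g0 = g1 = 0.0
        for x, y in zip(xs, ys):
            err = sigmoid(b0 + b1 * x) - y  # prediction error for this observation
            g0 += err
            g1 += err * x
        b0 -= lr * g0 / n
        b1 -= lr * g1 / n
    return b0, b1


# Hypothetical data: exposure level (explanatory) and disease present (1) or absent (0)
exposure = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0]
disease = [0, 0, 0, 1, 0, 1, 0, 1, 1, 1]

b0, b1 = fit_logistic(exposure, disease)
p_low = sigmoid(b0 + b1 * 0.5)
p_high = sigmoid(b0 + b1 * 5.0)
print(f"b1 = {b1:.2f}, P(disease | 0.5) = {p_low:.2f}, P(disease | 5.0) = {p_high:.2f}")
```

A positive `b1` means higher exposure is associated with higher odds of disease, and `math.exp(b1)` can be read as the odds ratio for a one-unit increase in the explanatory variable.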

Conclusion

The explanatory variable is a fundamental concept that underpins nearly all forms of statistical analysis. By helping to explain changes in outcomes, it allows researchers to uncover relationships, test theories, and make informed predictions. Careful selection and validation of explanatory variables is what makes models reliable across disciplines.

From simple comparisons to complex models, understanding how explanatory variables function and how to use them correctly can significantly improve the quality of analysis. Whether you’re a student, researcher, or data professional, mastering this concept is a critical step toward making sense of data in a structured and meaningful way.

Q&A

Q: How do explanatory variables differ from control variables?

A: Control variables are held constant (or adjusted for) to isolate effects; explanatory variables are deliberately varied or observed to test their impact.

Q: Can categorical variables be explanatory?

A: Yes, via dummy coding (e.g., region as 0/1 variables) in regression.

Q: What’s the best way to select them?

A: Use theory, correlation analysis, and methods like LASSO for sparsity.

Q: Do they prove causation?

A: No. On their own they show association only; experiments or causal inference methods are needed to establish causation.

Q: How many are ideal in a model?

A: Balance the number of variables against sample size (a common rule of thumb is 10-20 observations per variable) to avoid overfitting.