In the realm of research and data analysis, statistical tests serve as indispensable tools for drawing meaningful conclusions from observed data. They provide a rigorous framework for evaluating hypotheses, identifying relationships, and making predictions, moving beyond mere descriptive summaries to inferential insights about larger populations. The key to unlocking the power of statistical analysis lies in selecting the appropriate test for a given research question and data structure. An incorrect choice can lead to erroneous conclusions, misinterpretation of results, and ultimately, flawed research findings.

Statistical tests are used to test hypotheses relating to either the difference between two or more samples/groups, or the relationship between two or more variables.

What is a statistical test?

Every statistical test starts from a null hypothesis. Depending on what you are testing, the null hypothesis is that there is no difference between the samples or groups, or that there is no relationship between the variables being tested. A statistical test assesses whether the data provide enough evidence to reject the null hypothesis; if they do not, you fail to reject it. Statisticians also refer to the alternative hypothesis, which is that there is a difference between the samples or groups, or that there is a relationship between the variables.

What does a statistical test do?

A statistical test will often have two key outputs: a test statistic and a p-value. The test statistic is a single number that summarizes how closely your data match what would be expected under the null hypothesis. Put another way, it represents how far the difference between samples, or the relationship between variables, in your data departs from the null hypothesis of ‘no difference’ or ‘no relationship’. Choosing the right statistical test matters because different tests make different assumptions about how the data are distributed.
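As a minimal sketch of what a test statistic is, the snippet below computes Welch's t statistic (a variant of the independent samples t-test that does not assume equal variances) using only Python's standard library. The function name `welch_t` and the sample values are hypothetical; the statistic expresses the difference in means in units of its estimated standard error.

```python
import math
import statistics


def welch_t(sample_a, sample_b):
    """Return Welch's t statistic and its approximate degrees of freedom."""
    mean_a, mean_b = statistics.mean(sample_a), statistics.mean(sample_b)
    # Sample variances (n - 1 denominator) divided by each sample size.
    va = statistics.variance(sample_a) / len(sample_a)
    vb = statistics.variance(sample_b) / len(sample_b)
    # How many standard errors the observed difference lies from zero.
    t = (mean_a - mean_b) / math.sqrt(va + vb)
    # Welch-Satterthwaite approximation for the degrees of freedom.
    df = (va + vb) ** 2 / (va ** 2 / (len(sample_a) - 1) + vb ** 2 / (len(sample_b) - 1))
    return t, df


t, df = welch_t([1, 2, 3, 4, 5], [3, 4, 5, 6, 7])
print(t, df)
```

Here the means differ by 2 and the standard error is 1, so t is -2.0. A library such as SciPy (`scipy.stats.ttest_ind(a, b, equal_var=False)`) returns the same kind of statistic together with its p-value.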

The p-value is the probability of obtaining a test statistic at least as extreme as the one observed, assuming the null hypothesis is true. The smaller the p-value, the less compatible your data are with the null hypothesis. Statisticians often define a significance level, called alpha, below which the null hypothesis is rejected. This is commonly set to 0.05, but it could also be higher or lower depending on the context.
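To make the definition concrete, here is a minimal sketch using only the standard library, assuming a hypothetical coin-flip experiment: if the coin is fair (the null hypothesis), how likely is it to see 16 or more heads in 20 flips? This is an exact one-sided binomial test; the function name is illustrative.

```python
from math import comb


def binomial_p_value_upper(k, n, p_null=0.5):
    """One-sided p-value P(X >= k) for X ~ Binomial(n, p_null) under H0."""
    return sum(comb(n, i) * p_null ** i * (1 - p_null) ** (n - i)
               for i in range(k, n + 1))


alpha = 0.05  # conventional significance level
p_value = binomial_p_value_upper(16, 20)  # 16 heads in 20 flips
decision = "reject H0" if p_value < alpha else "fail to reject H0"
print(f"p = {p_value:.4f} -> {decision}")
```

The p-value of about 0.006 is below alpha = 0.05, so the null hypothesis of a fair coin would be rejected.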

Some statistical tests also report a confidence interval, which is a range of plausible values for the quantity being estimated (such as a mean difference) at a confidence level of 1 − alpha. For example, consider a two-tailed test of the mean difference between two samples. If the estimated mean difference is 1.0 and the 95% confidence interval is 0.8 to 1.2, you can be 95% confident that the true mean difference lies between 0.8 and 1.2. Because this interval excludes zero, the same test would reject the null hypothesis of no difference at alpha = 0.05.
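A minimal sketch of a confidence interval for a difference in means, assuming two independent samples and a large-sample normal (z) approximation so that only the standard library is needed. For small samples a t critical value would normally be used instead; the function name and data are hypothetical.

```python
import math
import statistics
from statistics import NormalDist


def mean_diff_ci(sample_a, sample_b, confidence=0.95):
    """Approximate CI for mean(a) - mean(b) using a standard normal quantile."""
    diff = statistics.mean(sample_a) - statistics.mean(sample_b)
    se = math.sqrt(statistics.variance(sample_a) / len(sample_a)
                   + statistics.variance(sample_b) / len(sample_b))
    # Two-sided critical value, about 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return diff - z * se, diff + z * se


low, high = mean_diff_ci([1, 2, 3, 4, 5], [0, 1, 2, 3, 4])
print(f"95% CI: {low:.2f} to {high:.2f}")
```

With these made-up samples the interval runs from about -0.96 to 2.96; because it contains zero, the data are compatible with no difference at the 95% level.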

Steps for Choosing the Correct Statistical Test

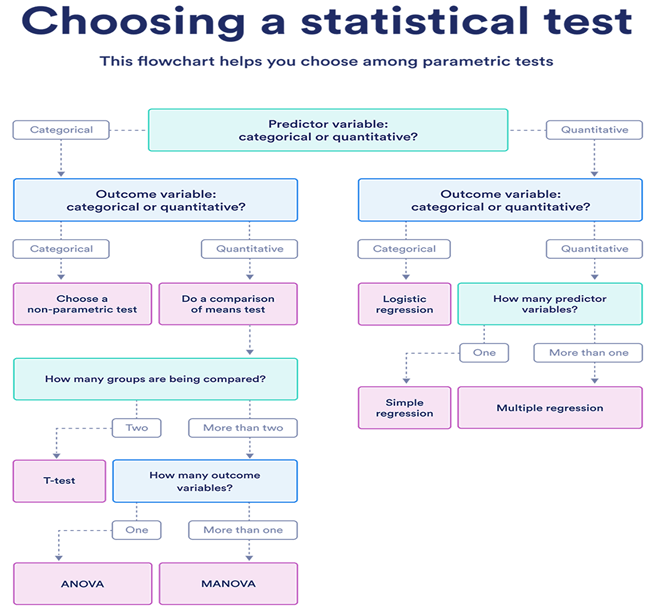

Choosing the correct statistical test is fundamental for valid research outcomes, and the provided flowchart offers a clear pathway. The process begins by identifying your Predictor Variable (Independent Variable), which is the factor you believe influences another variable. If your predictor is Categorical (e.g., gender, treatment group), you’ll follow one branch; if it’s Quantitative (e.g., age, income), you’ll follow another.

Next, you assess your Outcome Variable (or Dependent Variable, DV), which is what you are measuring or observing.

When your Predictor is Categorical:

- If your Outcome is also Categorical (e.g., yes/no response), you generally opt for a non-parametric test like a Chi-Square test, which examines associations between categories.

- If your Outcome is Quantitative (e.g., test scores, blood pressure), you’re looking to compare means between groups. Here, the number of groups matters:

  - For Two groups, a T-test (Independent Samples T-test for different groups, Paired Samples T-test for related groups) is appropriate.

  - For More than two groups, you then consider the number of outcome variables:

    - With One outcome variable, ANOVA (Analysis of Variance) is used to compare means across multiple groups.

    - With More than one outcome variable, MANOVA (Multivariate Analysis of Variance) allows for the comparison of means of multiple DVs across groups.
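The Chi-square test mentioned above compares the observed counts in a contingency table with the counts expected if the two categorical variables were unrelated. Below is a minimal standard-library sketch for a 2×2 table, assuming hypothetical counts and a hypothetical function name. It omits Yates' continuity correction, which SciPy's `scipy.stats.chi2_contingency` applies to 2×2 tables by default, so SciPy's default output would differ for the same table.

```python
import math
from statistics import NormalDist


def chi_square_2x2(table):
    """Pearson chi-square statistic (no continuity correction) and p-value, 2x2 table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Count expected in this cell if rows and columns were independent.
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    # With 1 degree of freedom, chi-square is the square of a standard normal
    # variable, so P(X >= chi2) = 2 * (1 - Phi(sqrt(chi2))).
    p_value = 2 * (1 - NormalDist().cdf(math.sqrt(chi2)))
    return chi2, p_value


# Hypothetical counts: rows = treatment / control, columns = yes / no
chi2, p = chi_square_2x2([[10, 20], [20, 10]])
print(round(chi2, 3), round(p, 4))
```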

When your Predictor is Quantitative:

- If your Outcome is Categorical, Logistic Regression is the go-to test, predicting the probability of a categorical outcome based on quantitative predictors.

- If your Outcome is Quantitative, you’re exploring predictive relationships:

  - With One predictor variable, Simple Regression models the linear relationship between the single predictor and the outcome.

  - With More than one predictor variable, Multiple Regression extends this to include several predictors in predicting the outcome variable.
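The decision branches described above can be encoded as a small lookup function. This is a sketch under the assumption that you already know each variable's type and the number of groups, outcomes, and predictors; the function and parameter names are hypothetical, and, like the flowchart, it does not check any statistical assumptions.

```python
def choose_test(predictor, outcome, n_groups=None, paired=False,
                n_outcomes=1, n_predictors=1):
    """Return the test suggested by the flowchart.

    predictor and outcome must each be "categorical" or "quantitative".
    """
    kinds = {"categorical", "quantitative"}
    if predictor not in kinds or outcome not in kinds:
        raise ValueError("predictor and outcome must be 'categorical' or 'quantitative'")
    if predictor == "categorical":
        if outcome == "categorical":
            return "Chi-square test"
        # Quantitative outcome: compare means, so the number of groups matters.
        if n_groups is None or n_groups < 2:
            raise ValueError("comparing means needs at least two groups")
        if n_groups == 2:
            return "Paired samples t-test" if paired else "Independent samples t-test"
        return "ANOVA" if n_outcomes == 1 else "MANOVA"
    # Quantitative predictor
    if outcome == "categorical":
        return "Logistic regression"
    return "Simple regression" if n_predictors == 1 else "Multiple regression"


print(choose_test("categorical", "quantitative", n_groups=3))
```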

Statistical assumptions

Statistical tests make some common assumptions about the data they are testing:

- Independence of observations (no autocorrelation): the observations you include in your test are independent of one another. For example, multiple measurements of a single test subject are not independent, while measurements of different test subjects usually are.

- Homogeneity of variance: the variance within each group being compared is similar across all groups. If one group has much more variation than the others, the test's results can become unreliable (for example, its false-positive rate can drift away from alpha).

- Normality of data: the data follows a normal distribution (a.k.a. a bell curve). This assumption applies only to quantitative data.
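As an informal first check of homogeneity of variance, you can compare the largest and smallest group variances. The sketch below uses only the standard library, a hypothetical helper `variance_ratio`, and made-up data. The cut-off of roughly 4 in the comment is one commonly quoted rule of thumb, not a fixed standard; a formal test such as Levene's test (available as `scipy.stats.levene`) is the more usual choice.

```python
import statistics


def variance_ratio(*groups):
    """Ratio of the largest to the smallest sample variance across the groups."""
    variances = [statistics.variance(g) for g in groups]
    return max(variances) / min(variances)


# Hypothetical measurements for two groups
ratio = variance_ratio([4, 5, 6, 5, 4], [3, 7, 5, 9, 1])
# Assumed rule of thumb: ratios well above ~4 suggest unequal variances.
print(f"variance ratio = {ratio:.1f}")
```

Here the second group's variance is more than ten times the first's, so a test that does not assume equal variances (such as Welch's t-test) would be the safer choice.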

Why can’t you accept a null hypothesis?

It might seem logical that a statistical test can have one of two outcomes: the null hypothesis is accepted or rejected. Most statisticians and researchers, however, will say you can either reject the null hypothesis or fail to reject it. This might seem pedantic, but failing to reject the null hypothesis means only that the data are not persuasive enough for us to prefer the alternative hypothesis; it does not show that the null hypothesis is true.

If the p-value is less than your alpha, the null hypothesis can be rejected and the result is called statistically significant: the data provide evidence of a difference or relationship. If the p-value is greater than or equal to your alpha, you fail to reject the null hypothesis.

One-tailed or two-tailed?

In a two-tailed statistical test, the null hypothesis is that there is ‘no difference’ between samples or ‘no relationship’ between variables, and the alternative hypothesis is that there is a difference or relationship. In such a scenario, the difference or relationship might be positive or negative. In a two-tailed test, half of your alpha is allocated to testing the statistical significance in one direction, and the other half is allocated to testing the statistical significance in the other direction.

Sometimes, you may have a prior belief that the difference or relationship is in one particular direction; for example, that the difference is greater than zero, not smaller. In such a scenario, a one-tailed test might be appropriate, in which all of your alpha is allocated to testing the statistical significance in one direction.
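The effect of splitting alpha can be seen with a z statistic and the standard normal distribution. This minimal sketch assumes a hypothetical observed z of 1.8 and uses only the standard library's `NormalDist`.

```python
from statistics import NormalDist

z = 1.8  # hypothetical observed z statistic
std_normal = NormalDist()

p_one_tailed = 1 - std_normal.cdf(z)             # H1: effect > 0
p_two_tailed = 2 * (1 - std_normal.cdf(abs(z)))  # H1: effect != 0

alpha = 0.05
crit_one = std_normal.inv_cdf(1 - alpha)      # all of alpha in one tail
crit_two = std_normal.inv_cdf(1 - alpha / 2)  # alpha split across both tails
print(f"one-tailed p = {p_one_tailed:.3f}, two-tailed p = {p_two_tailed:.3f}")
print(f"critical z: one-tailed {crit_one:.3f}, two-tailed {crit_two:.3f}")
```

With z = 1.8, the one-tailed p-value falls below 0.05 while the two-tailed one does not, which is why the direction of a one-tailed test has to be justified before looking at the data.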

What statistical test should I choose?

Which statistical test to choose depends on several factors: the type of variables you have (interval, ordinal, or nominal), the distribution of your data, and its structure.

Choosing a parametric test: regression, comparison, or correlation

Parametric tests usually have stricter requirements than nonparametric tests and are able to make stronger inferences from the data. They should only be used with data that meet the common assumptions of statistical tests.

The most common types of parametric tests include regression tests, comparison tests, and correlation tests.

Regression tests

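Regression tests model how an outcome variable changes with one or more predictor variables. They can be used to estimate the effect of one or more continuous variables on another variable, although a cause-and-effect interpretation depends on the study design (for example, a randomized experiment) rather than on the regression itself.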
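Simple regression, the most basic case, fits a straight line by ordinary least squares. The sketch below uses only the standard library and hypothetical study-hours data; the function name is illustrative. On Python 3.10+, `statistics.linear_regression(x, y)` returns the same slope and intercept. The fit itself does not report a p-value.

```python
import statistics


def fit_simple_regression(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    mean_x, mean_y = statistics.mean(x), statistics.mean(y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data: hours studied vs. exam score
hours = [1, 2, 3, 4, 5]
score = [52, 55, 61, 64, 68]
slope, intercept = fit_simple_regression(hours, score)
print(f"score = {intercept:.1f} + {slope:.1f} * hours")
```

For these made-up numbers the fitted slope is 4.1, i.e. about 4.1 extra points per additional hour studied.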

Comparison tests

Comparison tests look for differences among group means. They can be used to test the effect of a categorical variable on the mean value of some other characteristic.

T-tests are used to compare the means of exactly two groups, for example, the average heights of two groups of people. ANOVA is used to compare the means of more than two groups, for example, the average heights of children, teenagers, and adults; MANOVA extends this to more than one outcome variable.
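A minimal sketch of the one-way ANOVA F statistic, which compares the variation between group means with the variation within groups. It uses only the standard library; the function name and the height values (in cm) are hypothetical. Turning F into a p-value requires the F distribution, for example via `scipy.stats.f_oneway`.

```python
import statistics


def one_way_anova_f(*groups):
    """Return the one-way ANOVA F statistic and its (between, within) degrees of freedom."""
    all_values = [v for g in groups for v in g]
    grand_mean = statistics.mean(all_values)
    group_means = [statistics.mean(g) for g in groups]
    k, n = len(groups), len(all_values)
    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    # Variation of observations around their own group mean.
    ss_within = sum(sum((v - m) ** 2 for v in g) for g, m in zip(groups, group_means))
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


children, teenagers, adults = [120, 125, 130], [160, 165, 170], [170, 175, 180]
f_stat, df_b, df_w = one_way_anova_f(children, teenagers, adults)
print(f"F({df_b}, {df_w}) = {f_stat:.1f}")
```

For these made-up heights, F = 84 with 2 and 6 degrees of freedom, a large value because the group means differ far more than individuals within each group do.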

Correlation tests

Correlation tests check whether variables are related without hypothesizing a cause-and-effect relationship.

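These can be used to test whether two variables are correlated, for example, to check whether two predictors you want to include in a multiple regression are strongly related to each other.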
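Correlation tests are usually built on a correlation coefficient such as Pearson's r, which ranges from -1 to 1. A minimal standard-library sketch with hypothetical data is below; on Python 3.10+, `statistics.correlation(x, y)` computes the same coefficient. Testing r for significance typically uses t = r·√(n − 2)/√(1 − r²) with n − 2 degrees of freedom, which this sketch does not do.

```python
import math
import statistics


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mean_x, mean_y = statistics.mean(x), statistics.mean(y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)


r = pearson_r([1, 2, 3, 4, 5], [2, 4, 5, 4, 5])
print(f"r = {r:.2f}")
```

Here r is about 0.77, a fairly strong positive association.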

Choosing a nonparametric test

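Non-parametric tests make fewer assumptions about the data, which makes them useful when one or more of the common statistical assumptions are violated. However, the inferences they make aren’t as strong as with parametric tests. Some popular non-parametric tests are:

- Chi-square test of independence: tests for an association between two categorical variables.

- Mann-Whitney U test (Wilcoxon rank-sum test): an alternative to the independent samples t-test.

- Wilcoxon signed-rank test: an alternative to the paired samples t-test.

- Kruskal-Wallis H test: an alternative to one-way ANOVA.

- Spearman's rank correlation: an alternative to Pearson's correlation.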
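The Mann-Whitney U test is a common non-parametric alternative to the independent samples t-test. Its U statistic depends only on the ordering of the values, which is why it needs no normality assumption. A minimal sketch by direct pair counting, with a hypothetical function name and made-up data; `scipy.stats.mannwhitneyu` would also provide a p-value.

```python
def mann_whitney_u(x, y):
    """U for each sample: pairs (xi, yj) where xi > yj count 1, ties count 0.5."""
    u_x = 0.0
    for xi in x:
        for yj in y:
            if xi > yj:
                u_x += 1
            elif xi == yj:
                u_x += 0.5
    # The two U values always sum to len(x) * len(y).
    u_y = len(x) * len(y) - u_x
    return u_x, u_y


u_x, u_y = mann_whitney_u([3, 5, 7, 9], [1, 2, 4, 6])
print(u_x, u_y)
```

For these values U is 13 for the first sample and 3 for the second; the smaller U is compared against its null distribution to obtain a p-value.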

Conclusion

Selecting the correct statistical test is not merely a procedural step but a foundational element of sound research methodology. It directly impacts the validity and reliability of findings, enabling accurate interpretations and robust conclusions. Adhering to this systematic guide empowers researchers to move beyond arbitrary choices, fostering a deeper understanding of their data and strengthening the credibility of their scientific contributions across diverse fields.

Data Science Blog